Software A/B testing is the antidote to hope marketing.

It’s a systematic, data-driven method for figuring out what actually works by showing different versions of an ad or landing page to different groups of people and letting the numbers decide the winner. No opinions, just results.

Stop guessing and start winning

Let’s be honest. Most PPC marketing is just expensive guesswork. You tweak a headline, change a CTA, and hope for the best. That’s not a strategy; it’s a prayer. It's also how you burn cash without ever really knowing why.

Imagine you have two ideas for an ad headline. Instead of debating which one feels better in a meeting, you run a simple test: show Version A to one group of people and Version B to another. The software then tracks which version gets more conversions, clicks, or whatever your goal is.

It’s a direct cage match between your ideas where the data picks the winner. No ego, no opinions—just cold, hard results.

The end of 'I think' marketing

This process fundamentally changes how you make decisions. It pulls your team away from subjective arguments and toward objective proof. Suddenly, the highest-paid person's opinion becomes a lot less important than what the customer actually does.

This data-driven approach builds a culture of learning where even the most strongly held beliefs can be challenged by real evidence. To truly stop guessing, you have to get good at advertising effectiveness measurement for all your campaigns. It’s a core skill for anyone who is serious about performance.

From manual tweaks to automated wins

For years, A/B testing was a manual, clunky process. Setting up tests was a time-consuming headache, and trying to scale them across a large Google Ads account was practically impossible.

Now, the game is changing. Modern A/B testing platforms automate this entire workflow, letting you move from slow, one-off tests to continuous optimization.

Here’s a look at how the old, manual way compares to a modern, automated approach.

Manual vs. automated A/B testing: a quick breakdown

The difference isn't just about speed; it's about what becomes possible when you remove the manual bottlenecks.

| Aspect | Manual A/B Testing | Automated A/B Testing |
|---|---|---|
| Scale | Limited to a few high-traffic campaigns; impossible to manage at scale. | Can test thousands of variations across every keyword and ad group. |
| Speed | Slow setup and long run times to get meaningful data on a few tests. | Launches tests instantly and gets results faster with dynamic traffic allocation. |
| Optimization | Periodic improvements based on one-off tests. | Continuous, real-time optimization that compounds gains over time. |
| Effort | High manual effort required from a dedicated person or team. | Low-touch; the system handles test creation, monitoring, and implementation. |

This is how you stop wasting your budget on underperforming campaigns and start making decisions that demonstrably grow your business. In the hyper-competitive world of Google Ads, automation isn't a luxury; it's the only practical way to run A/B tests at scale.

The core engine of effective A/B testing software

Not all A/B testing software is created equal. Let’s be real: a lot of tools out there are clunky, overpriced, or just plain ineffective. They promise the world but deliver a headache. If you’re serious about getting real results, you need a tool with a solid engine built on a few core, non-negotiable components.

Think of it like building a high-performance race car. You wouldn't just throw any old parts together and expect to win. You need a powerful engine, a responsive steering system, a reliable transmission, and a dashboard that gives you the right data at the right time. A/B testing software is no different.

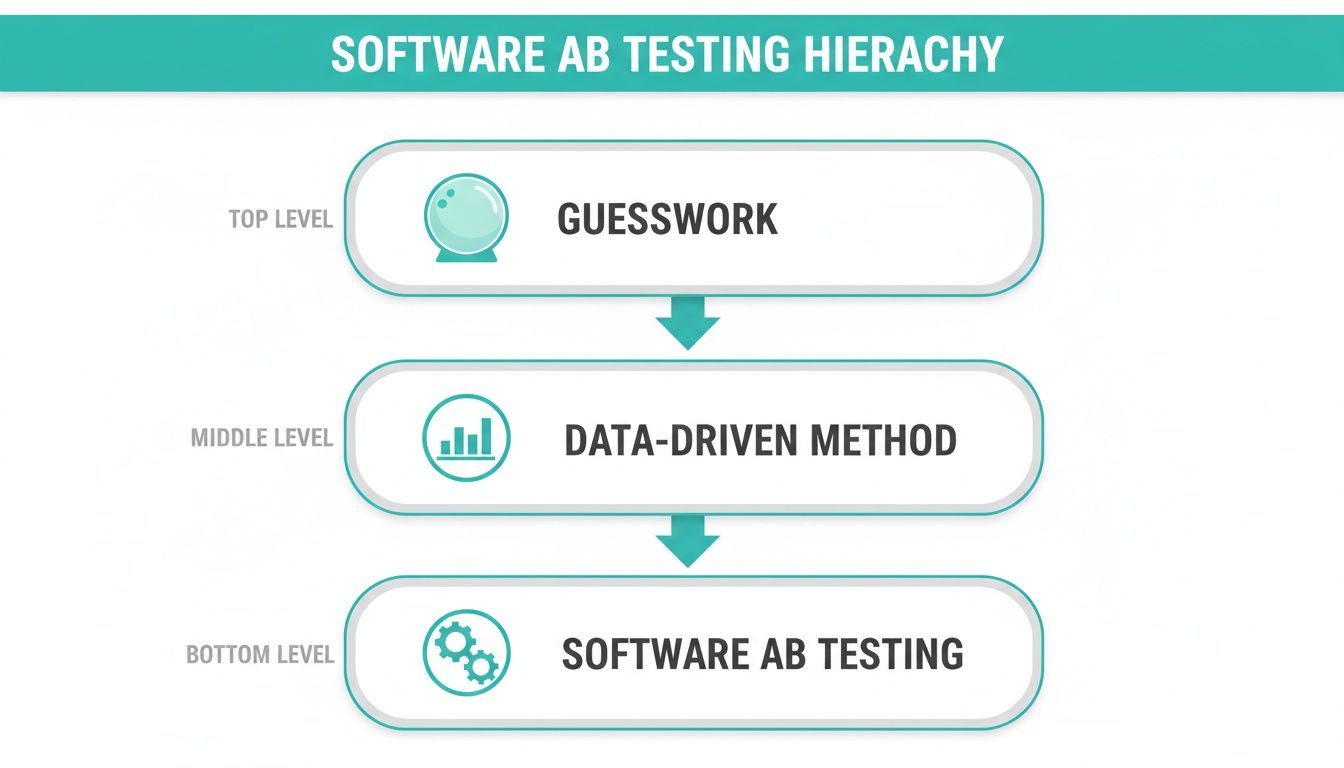

This visual breaks down the hierarchy of optimization: the journey from pure guesswork and hoping for the best to a methodical, software-powered engine for continuous improvement.

Experiment design and visual editing

First up is experiment design. If you need to file a ticket with a developer and wait three days just to change the text on a button, you’ve already lost. That friction kills momentum and discourages testing altogether.

A top-tier tool must have a powerful and intuitive visual editor. This is the difference between being empowered and being bottlenecked. You should be able to click on an element—a headline, an image, a form—and create a variation in minutes, not days. This freedom lets your team act on insights quickly and test ideas the moment they arise.

Traffic allocation and control

Next is traffic allocation. This sounds technical, but it’s simple: the software must give you precise control over who sees which version of your test. It needs to split traffic reliably and, most importantly, randomly.

Without proper randomization, you introduce bias that completely invalidates your results. It’s like trying to judge a race where one runner gets a head start. The software’s job is to be the fair, impartial referee, ensuring that the only thing influencing the outcome is the change you’re testing.
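Under the hood, one common way to get a split that is both random and sticky is to hash a stable visitor ID. Here's a minimal Python sketch, assuming you already have a persistent visitor ID (for example, from a first-party cookie); `assign_variant` and its parameters are hypothetical, not any particular tool's API.

```python
import hashlib


def assign_variant(visitor_id: str, experiment_id: str, variants=("A", "B")) -> str:
    """Deterministically bucket a visitor into one variant (hypothetical helper).

    Hashing the experiment ID together with the visitor ID gives each visitor a
    stable, effectively random bucket: the same person always sees the same
    version, and the split depends on nothing but the hash (not device, time
    of day, or any other trait).
    """
    key = f"{experiment_id}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    width = 10_000 // len(variants)                      # equal slice per variant
    return variants[min(bucket // width, len(variants) - 1)]


# Usage: across many visitors the split should land close to 50/50.
counts = {"A": 0, "B": 0}
for i in range(100_000):
    counts[assign_variant(f"visitor-{i}", "headline-test")] += 1
```

Salting the hash with the experiment ID means each new test reshuffles visitors, so someone who landed in B last time isn't automatically in B again.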

The statistical model matters

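Here’s where many tools fall flat, and it’s a critical failure. The statistical model is the brain of the operation. Many platforms use basic frequentist methods that can easily produce false positives, especially when results are checked over and over and the test is stopped the moment it looks good. They’ll declare a winner with 95% statistical significance, but that doesn't mean there's a 95% chance that B is better than A. It means that if A and B actually performed the same, a gap at least this large would show up less than 5% of the time by chance alone. That's a far less intuitive statement.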

This is where you can be misled into rolling out a change that does nothing—or even hurts performance.
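For reference, here's a minimal sketch of the kind of calculation a basic frequentist tool runs: a pooled two-proportion z-test. The data and the function name are made up for illustration. For this example the p-value comes out around 0.016, so a tool would call B a significant winner, yet that number describes how surprising the data would be if there were no real difference, not the probability that B is better.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided pooled z-test for a difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    p_pool = (conv_a + conv_b) / (n_a + n_b)  # shared rate if A and B were identical
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Probability of a gap at least this large, in either direction, under "no difference"
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical data: A converts 200 of 5,000 visitors, B converts 250 of 5,000.
p_value = two_proportion_p_value(200, 5_000, 250, 5_000)
```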

A more robust approach is Bayesian statistics. Instead of giving you a simple yes/no answer, a Bayesian model tells you the probability that Version B is actually better than Version A. This is far more useful for making business decisions.

Knowing there’s an 85% chance your new headline will outperform the old one is a much clearer, more actionable insight. Our other guide covers the differences between multivariate testing vs. A/B testing; whichever you run, core statistical integrity is what separates a professional tool from a toy.
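A Bayesian version of the same question can be sketched with a Beta-Binomial model. This sketch assumes uniform Beta(1, 1) priors and simply samples both posteriors many times, counting how often B comes out ahead; commercial tools may use different priors or closed-form math, and the function name is hypothetical. With made-up data of 200 conversions from 5,000 visitors for A and 250 from 5,000 for B, it returns roughly 0.99, which under these assumptions reads directly as "about a 99% chance B is better."

```python
import random


def prob_b_beats_a(conv_a: int, n_a: int, conv_b: int, n_b: int,
                   draws: int = 100_000, seed: int = 42) -> float:
    """Monte Carlo estimate of P(rate_B > rate_A) with uniform Beta(1, 1) priors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Each posterior is Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a  # True counts as 1
    return wins / draws


prob = prob_b_beats_a(200, 5_000, 250, 5_000)
```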

Seamless tracking and analytics

Finally, if your software can’t tell you which version made you more money, what’s the point? Effective A/B testing requires deep integration with your analytics stack. It must connect seamlessly with tools like Google Analytics and, crucially, Google Ads.

It’s not enough to track clicks or even basic conversions. The system needs to track the metrics that actually matter to the business: Cost per Acquisition (CPA), Revenue per Visitor (RPV), and Return on Ad Spend (ROAS). A great tool doesn’t just tell you which button color got more clicks; it tells you which test variant generated the most profit. Anything less is just noise.
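Here's a small sketch of how those business metrics fall out of per-variant totals. The `VariantStats` class and all the numbers are hypothetical; the point is that the variant with more conversions and a lower CPA isn't automatically the one that makes more money. In this example A wins on conversions and CPA ($50 vs. about $54.55), but B wins on RPV ($4.13 vs. $3.60), ROAS (2.75 vs. 2.4), and revenue after ad spend.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    name: str
    visitors: int
    conversions: int
    ad_spend: float  # cost of the clicks that landed on this variant
    revenue: float   # revenue attributed to this variant's conversions

    @property
    def cpa(self) -> float:
        """Cost per acquisition: spend divided by conversions."""
        return self.ad_spend / self.conversions if self.conversions else float("inf")

    @property
    def rpv(self) -> float:
        """Revenue per visitor."""
        return self.revenue / self.visitors

    @property
    def roas(self) -> float:
        """Return on ad spend: revenue divided by spend."""
        return self.revenue / self.ad_spend

    @property
    def profit(self) -> float:
        """Revenue minus ad spend (ignores product and other costs)."""
        return self.revenue - self.ad_spend


# Hypothetical results: B converts fewer visitors but brings in bigger orders.
variants = [
    VariantStats("A", visitors=4_000, conversions=120, ad_spend=6_000.0, revenue=14_400.0),
    VariantStats("B", visitors=4_000, conversions=110, ad_spend=6_000.0, revenue=16_500.0),
]
winner = max(variants, key=lambda v: v.profit)
```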

Putting theory into practice with Google Ads

Alright, enough theory. Let’s talk about how this actually works with Google Ads, because that’s where the money is made or lost. The goal is simple: message matching. Your ad makes a promise, and your landing page needs to deliver on that promise perfectly. A/B testing is how you perfect that digital handshake.

Think of your Google Ad as the opening line in a conversation and the landing page as the substance of that talk. If they don't connect, the conversation ends abruptly. Your potential customer clicks away, and you just paid for the privilege of annoying them. We can do better.

This alignment between ad and landing page is where most campaigns leak cash. Good A/B testing plugs those leaks by letting you systematically test every part of that user journey, starting with the ad itself.

Testing your ad creative

Inside Google Ads, this is your first and most important battleground. You’re fighting for a click against a sea of competitors. Small changes here can have a massive impact on your Click-Through Rate (CTR) and, by extension, your Quality Score.

- Test your headlines: Pit a benefit-driven headline like ‘Effortless Payroll for Teams’ against a feature-focused one like ‘Automated Tax Filing Included.’ Or try a direct offer, such as ‘Save 20% on Your First Year.’

- Test your descriptions: One description might highlight key features, while another tells a short story about a customer’s success. You never know what will resonate until you test it.

- Test your display URL: Sometimes, a simple, clean URL performs better than one loaded with keywords. It all comes down to what your audience trusts.

The ad is the promise. Now, let’s talk about keeping it.

Testing your landing page experience

Once a user clicks, the landing page has one job: convert them. This is where your A/B testing platform truly shines, giving you the power to test everything a user sees and interacts with. The possibilities are huge, but don't get overwhelmed. Start with the big levers.

Here are some of the most impactful elements you can test on your landing pages:

- The main headline: Does your headline perfectly mirror the ad's promise? Test a direct match against a more creative, intriguing alternative.

- The call-to-action (CTA): This is a classic, but for good reason. Test the button text, the color (high-contrast colors often win), and even its placement on the page.

- The hero image or video: A picture of your product in action might crush a generic stock photo. Or maybe a short video explaining the value prop is the key. You have to test it.

- Social proof: Which is more persuasive? A wall of client logos or two powerful, in-depth customer testimonials? Test different formats and placements.

- Page layout: For a more advanced test, you can pit your standard layout against a completely redesigned version. Sometimes a radical change is needed to unlock a major performance lift.

The process itself is straightforward. You create your variants in the A/B testing software, connect it to your landing page URL, and launch the test. The software then automatically routes traffic from your ads to the different versions.

Beyond the conversion rate

Now for the metrics. Everyone obsesses over Conversion Rate, and for good reason. But looking only at conversions is like judging a football match by the final score without watching the game. You miss the nuance that tells you why you won or lost.

A great A/B testing tool tracks a richer set of KPIs that give you the full picture.

- Cost per Acquisition (CPA): This is the bottom line. A variant might have a slightly lower conversion rate but attract higher-quality leads, so each lead that actually becomes a customer costs less to acquire. That makes it the true winner for profitability.

- Bounce Rate: If Version B has a 30% lower bounce rate than Version A, it’s a clear signal that its message is resonating better, even if the conversions haven't caught up yet. It tells you you're on the right track.

- Average Time on Page: More time on page often indicates higher engagement. Users are reading your copy and considering your offer, which is a strong leading indicator for future conversions.
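The CPA point above is easiest to see with a bit of funnel arithmetic. This sketch assumes both landing-page variants receive the same clicks at the same cost per click, and that you can measure each variant's lead-to-customer close rate (for example, from your CRM); the function name and every number are hypothetical. Variant A converts better (5% vs. 4%) and has the cheaper lead ($40 vs. $50), but B's leads close twice as often, so each paying customer costs $250 instead of $400.

```python
def funnel_costs(clicks: int, cpc: float, conversion_rate: float, close_rate: float) -> dict:
    """Cost per lead and cost per paying customer for one landing-page variant."""
    spend = clicks * cpc
    leads = clicks * conversion_rate  # landing-page conversions
    customers = leads * close_rate    # leads that actually buy
    return {"cost_per_lead": spend / leads, "cost_per_customer": spend / customers}


# Hypothetical: both variants get 2,000 clicks at $2.00 each.
variant_a = funnel_costs(2_000, 2.00, conversion_rate=0.05, close_rate=0.10)
variant_b = funnel_costs(2_000, 2.00, conversion_rate=0.04, close_rate=0.20)
```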

Tracking these metrics correctly is foundational. If your setup is messy, your test results will be useless. For anyone needing a refresher, you can learn more about how to set up Google Ads conversion tracking properly in our dedicated guide. Get the fundamentals right, and your testing will build real, sustainable growth for your business. 🚀

Why most A/B tests go wrong

Let’s get real for a minute: a huge number of A/B tests are a total waste of time and money. I see it constantly—smart, well-meaning teams run experiments that are broken from the start. They end up with results that are either meaningless or, even worse, flat-out misleading.

They’re basically just guessing, but with more steps and a fancy dashboard.

It's a frustrating loop. You get a powerful A/B testing tool, run what feels like a brilliant experiment, and then bet your budget on bad data. The problem isn’t the software; it’s the strategy. Or, more often, the complete lack of one.

Let's get brutally honest about the common traps people fall into. More importantly, let's talk about how to sidestep them to run tests that actually move the needle. We’ve all made these mistakes. It’s about getting smarter together.

Not enough traffic to matter

The biggest and most common mistake? Running a test with a sample size so small it’s laughable. You can't test two landing pages with 50 visitors each and declare a winner. That’s not data; it’s noise. Any lift you think you see is almost certainly just random chance.

You need enough traffic to reach statistical significance, the point where the difference you see is unlikely to be explained by random chance alone. Most decent software will tell you when you've hit this mark, but here’s a rule of thumb: if your test pages are only getting a handful of conversions a day, your test needs to run for a very long time to be valid.
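To put "enough traffic" into numbers, here's a sketch of the standard sample-size formula for comparing two conversion rates (two-sided test, normal approximation). The 5% significance level and 80% power defaults are common conventions, not laws, and the function name is hypothetical. With a 4% baseline conversion rate and a hoped-for 10% relative lift, it asks for roughly 39,500 visitors per variant, which is why 50 visitors each tells you nothing.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors needed in EACH variant to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


n = sample_size_per_variant(0.04, 0.10)
```

Notice how fast the requirement grows as the lift you want to detect shrinks: halving the detectable lift roughly quadruples the traffic you need.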

Calling the test too early

This one is born from pure impatience. You’re two days into a test, Version B is ahead by 10%, and you feel the victory lap coming on. So you shut it down and roll out the winner. Huge mistake. User behavior can swing wildly depending on the day of the week or even the time of day.

A test that’s crushing it on a Tuesday morning might completely tank over the weekend. To get a true picture, you have to let your test run long enough to capture these natural cycles.

Patience isn't just a virtue here; it's a non-negotiable requirement for getting accurate results.

Testing too many things at once

Here's another classic error. In a single variation, you change the headline, swap out the hero image, rewrite the CTA, and tweak the button color. Your new version wins by 15%. Fantastic! But... why?

You have absolutely no idea which of those four changes actually made the difference. Was it the new headline? The more compelling image? You learned that the combination worked, but you didn't learn what works. That’s a massive missed opportunity to understand your customer's psychology.

Start with simple, isolated tests. They build a foundation of knowledge that informs your next, more ambitious experiments. Every test should be a learning opportunity that feeds your future strategy, not just a one-off tactic to snag a quick win.
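If you did want to untangle those four changes, you'd need a full factorial test covering every combination, and the number of versions multiplies fast: two options for each of four elements is 2^4 = 16 combinations. This sketch (all element names and traffic figures are hypothetical) shows the cost: the same 10,000 visitors that give each arm 5,000 visitors in a simple A/B split leave just 625 per combination.

```python
from itertools import product

# Hypothetical: four elements changed at once, each with a current and a new version.
changes = {
    "headline": ["current", "new"],
    "hero_image": ["current", "new"],
    "cta_text": ["current", "new"],
    "button_color": ["current", "new"],
}

# A full factorial design enumerates every combination of every element.
cells = [dict(zip(changes, combo)) for combo in product(*changes.values())]


def visitors_per_cell(total_visitors: int, num_cells: int) -> int:
    """Even split of traffic across all test cells."""
    return total_visitors // num_cells


per_cell = visitors_per_cell(10_000, len(cells))
```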

How AI is scaling A/B testing to the next level

Manually setting up A/B tests for a handful of your most important campaigns is manageable. But what happens when you’re running hundreds, or even thousands, of ad groups for a client?

The answer is simple: you can't do it right. The sheer amount of work makes it a non-starter.

This is where the entire game is changing, and I mean really changing. We're moving from incremental tweaks to exponential growth. The rise of AI-powered platforms is automating this whole process at a scale that was completely unimaginable just a few years ago, taking us beyond simple A/B testing and into new territory altogether.

This explosion in capability helps explain why the A/B testing software market, valued at USD 1.5 billion in 2025, is projected to hit USD 4.4 billion by 2035, according to researchers at Future Market Insights. That growth suggests tools integrating AI into Google Ads are becoming essential for anyone serious about performance.

From manual A/B to autonomous optimization

Let's break down what this actually looks like.

Instead of you brainstorming two landing page variants for a single ad group, an AI can generate and test thousands of unique combinations for every single keyword. That’s not a typo. It creates bespoke ads and matching landing pages tailored to the specific intent behind each search query.

The system then monitors performance in real time. It doesn't just run a test for two weeks and give you a report. It dynamically shifts more traffic to the winning variants on the fly, effectively phasing out the losers automatically.
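Shifting traffic toward winners on the fly is usually framed as a multi-armed bandit problem. One well-known approach is Thompson sampling, sketched below; whether any particular platform uses it is an assumption, and the simulated "true" conversion rates of 3% and 5% are made up. For each visitor, the algorithm draws a plausible conversion rate for every variant from what it has seen so far and sends the visitor to the highest draw, so the stronger variant gradually takes most of the traffic.

```python
import random


def thompson_sampling(true_rates, visitors=20_000, seed=7):
    """Simulate Beta-Bernoulli Thompson sampling and return traffic per variant.

    true_rates are hypothetical conversion rates the algorithm cannot see;
    it only observes whether each simulated visitor converts.
    """
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    for _ in range(visitors):
        # Sample a plausible conversion rate for each variant from its Beta posterior.
        draws = [rng.betavariate(1 + s, 1 + f) for s, f in zip(successes, failures)]
        chosen = draws.index(max(draws))  # send this visitor to the best draw
        if rng.random() < true_rates[chosen]:
            successes[chosen] += 1
        else:
            failures[chosen] += 1
    return [s + f for s, f in zip(successes, failures)]


traffic = thompson_sampling([0.03, 0.05])
```

The trade-off versus a fixed 50/50 split: you waste less traffic on the loser, but the final comparison between variants is less precise.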

This isn't just A/B testing anymore; it's continuous, autonomous optimization. The machine is always learning, always testing, and always improving, 24/7. It's a fundamental shift from periodic, manual experiments to a state of constant, automated improvement.

This is where the real power lies. Instead of one person trying to juggle a few tests, you have an AI running thousands of micro-experiments simultaneously across your entire account.

The power of compounding gains

The result of this scaled approach is a compounding effect. A small win in one ad group is nice. But when you’re finding and implementing thousands of small wins every single day, the gains stack up incredibly fast. The AI uncovers pockets of performance you would never have the time or resources to find on your own.

This isn't some far-off future; this technology is here now, and it's creating a major gap between those who use it and those who don't. For a deeper look at what's possible, check out our guide on the best AI tools for digital marketing.

Embracing this shift is how you move from just managing campaigns to truly engineering growth. It's an exciting time to be building. 🚀

How to choose the right A/B testing software

Alright, let's talk about picking the right tool for the job. This isn't a small decision—the A/B testing software you choose dictates your workflow, your speed, and ultimately, your results. The market is flooded with options, but the right choice for you won't come from some generic listicle. It has to fit your specific needs.

Here’s a simple, no-nonsense framework to guide you. It’s not about finding the one perfect tool; it’s about finding the right tool for you, right now.

Integration is everything

First, and I can't stress this enough, look at integration. How easily does the software plug into your existing tech stack? If it’s a nightmare to connect with your landing page builder, Google Ads, and analytics platform, you're dead in the water before you even start.

A tool that creates friction is a tool that won’t get used. It needs to feel like a natural extension of your workflow, not another siloed platform you have to fight with every day. The setup should be quick, and the data should flow seamlessly between systems.

Match the tool to your scale

Second, be brutally honest about your scale. Your needs are wildly different depending on where you sit.

An agency managing dozens of clients needs a very different platform than a small business owner testing one or two core landing pages. Trying to use a small-scale tool for an enterprise-level job is like trying to build a skyscraper with a hammer. You need the right equipment for the ambition of the project.

User experience and true value

Third, dig into the user experience (UX). Is the interface clean and intuitive, or do you need a PhD in statistics just to launch a simple test? A clunky UI is a tax on your team’s time and creativity. A great platform gets out of your way and lets you focus on the ideas, not the mechanics.

Finally, look at cost versus value. Don't just pick the cheapest option—that’s a classic rookie mistake. A tool that costs more but delivers a 10x ROI through better insights, automation, or saved time is a much smarter long-term investment.

The market for this kind of software is booming for a reason. North America held the largest market share in 2023, and the United States market alone is forecast to reach USD 1.2 billion by 2032. You can learn more about these A/B testing software market trends to see where things are headed. This growth signals that advanced, scalable platforms are becoming crucial for staying competitive.

So, choose a tool that fits your workflow, matches your ambition, and helps you move faster. 🚀

A few A/B testing questions I hear all the time

When it comes to A/B testing in PPC, the same few questions always pop up. I'll get straight to the point so you can start testing with more confidence and less guesswork.

How long should I actually run an A/B test?

The short answer? Aim for at least two full business cycles, which usually means two weeks. This helps you smooth out the weird spikes and dips that happen on Mondays versus Saturdays.

Most testing tools will tell you when you’ve hit statistical significance. Trust that indicator, but only once the test has also reached its planned sample size and run time. It’s tempting to call a test early when one version shoots ahead, but that's a classic mistake.

What kind of conversion lift is "good"?

Forget the case studies promising a 300% lift from changing a button color. Those are rare and often misleading.

In the real world, a 5-10% uplift is a solid, sustainable win. It might not sound dramatic, but those small, consistent gains compound over time and drive serious, long-term ROI. Think of it as a series of small, smart wins, not a one-off lottery ticket.
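Here's the compounding arithmetic, assuming each lift is real, holds over time, and stacks multiplicatively on the previous winner (real tests don't guarantee any of that). Six sequential 5% wins come out to roughly a 34% total lift, slightly more than the 30% you'd get by simply adding them up.

```python
def compound_lift(lifts):
    """Total relative lift from a series of wins applied one after another."""
    total = 1.0
    for lift in lifts:
        total *= 1 + lift  # each win multiplies the previous conversion rate
    return total - 1


six_wins = compound_lift([0.05] * 6)  # 1.05 ** 6 - 1
```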

What if my traffic is too low for a clean test?

This is a big one. Running a test on a low-traffic page can feel like trying to get a clear answer in a noisy room. If you don't get enough visitors, reaching statistical significance could take months, and by then, the results might not even be relevant.

Running tiny tests on low-traffic pages is a great way to waste time. The data is often too noisy to trust. If you can't get enough volume, either make a massive change or group assets together to get a real signal.
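To see why only a massive change can show up on a low-traffic page, you can flip the sample-size math around and ask: with the traffic I have, how big a lift could I even detect? This sketch uses a normal approximation that assumes both variants share the baseline's variance, with conventional 5% significance and 80% power; the function name is hypothetical. At a 4% baseline with 1,000 visitors per variant, the smallest lift you could reliably detect is about 61%.

```python
from math import sqrt
from statistics import NormalDist


def relative_mde(baseline_rate: float, visitors_per_variant: int,
                 alpha: float = 0.05, power: float = 0.8) -> float:
    """Approximate minimum detectable effect, as a relative lift over baseline."""
    z = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    absolute = z * sqrt(2 * baseline_rate * (1 - baseline_rate) / visitors_per_variant)
    return absolute / baseline_rate


low_traffic = relative_mde(0.04, 1_000)    # roughly 0.61, i.e. a 61% lift
more_traffic = relative_mde(0.04, 10_000)  # roughly 0.19
```

Pooling similar pages or ad groups into one test raises `visitors_per_variant`, which is exactly why grouping assets helps.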

At the end of the day, a smart setup and a clear understanding of your data matter more than any fancy dashboard. Once you get these fundamentals right, A/B testing software stops being a complicated tool and becomes your best weapon against gut-feel marketing.

It's time to trade maybes for numbers. No more guesswork, just measurable growth.

Ready to scale your PPC wins with automated A/B testing? Try dynares