A/B Testing SEO: a No-BS Guide for Tech Entrepreneurs

Let's be clear: A/B testing for SEO isn't your standard landing page split test. Not even close.

It’s a different beast entirely. We’re not splitting users to see who converts; we’re scientifically measuring how our changes affect Google's perception of our pages. This is about rankings, click-through rates, and organic traffic. We're ditching gut feelings for hard data.

What A/B testing for SEO actually is

Most SEOs get testing completely wrong. They’ll tweak a page, see traffic go up, and pop the champagne, assuming their change was the cause. That’s just correlation. And it’s a dumb way to build a strategy. You have no real idea if your change worked, if a Google update rolled out, or if it was just a random Tuesday.

Real A/B testing for SEO is about isolating your changes to get clean, undeniable data. It's the only way to know for sure that your efforts are actually moving the needle.

It's about pages, not people

Unlike conversion rate optimization (CRO) where you show different page versions to different users, a proper SEO test splits pages. You take a big group of similar pages—think product categories, location pages, or blog posts—and divide them into two camps:

- Control group: These are the original, untouched pages. They act as your baseline for performance.

- Variant group: This is where you apply your change. A new title tag format, different H1s, updated structured data—whatever your hypothesis is.

Both groups stay live for Googlebot to crawl and index. Then you measure what happens. It's the difference between saying 'I think this worked' and 'I have data proving this change drove a 15% uplift in organic sessions.'

Proving causality, not correlation

The magic here is seeing how your variant group performs against what it was predicted to do, based on the control group's behavior. Smart testing platforms use historical data to build a forecast for your test pages. This method separates the impact of your changes from all the external noise, like algorithm updates or seasonal trends.

This is fundamentally different from a standard split test. We're often analyzing over 100 days of historical data to accurately forecast organic sessions. While a broad study might tell you top-ranking pages have 2,000 words, only a true SEO test proves whether expanding your content actually causes a traffic lift, which can reach 10-25% on the tested pages when you find a big winner. If you want to dive deeper into the stats, SearchPilot has a great write-up on their causality models.
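To make the "variant versus forecast" idea concrete, here is a minimal sketch. It assumes the simplest possible forecast: the ratio between variant and control traffic before the test keeps holding during the test. Real platforms use far richer models, and every name and number below is made up for illustration.

```python
def estimate_uplift(control_pre, variant_pre, control_test, variant_test):
    """Estimate the variant group's relative uplift against a control-based forecast."""
    # How the two groups related to each other before anything changed.
    ratio = sum(variant_pre) / sum(control_pre)
    # Forecast: what the variant pages would have done without the change,
    # given what the control pages actually did during the test.
    expected = sum(c * ratio for c in control_test)
    actual = sum(variant_test)
    return (actual - expected) / expected

# Illustrative daily organic sessions (made-up numbers).
control_pre = [1000, 1100, 900, 1000]
variant_pre = [500, 550, 450, 500]
control_test = [1200, 1300, 1100, 1200]  # a seasonal lift hits both groups
variant_test = [660, 715, 605, 660]

uplift = estimate_uplift(control_pre, variant_pre, control_test, variant_test)
print(f"Estimated uplift vs forecast: {uplift:.1%}")
```

Because the seasonal lift shows up in the control group too, it gets baked into the forecast and doesn't count as a win for your change.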

The goal isn't just to see which page gets more traffic. The goal is to prove, with statistical confidence, that your change caused an increase in organic performance. This is how scalable businesses operate. We don't guess. We test, measure, and build on a foundation of data. So, let's ditch the guesswork and get into how it’s actually done.

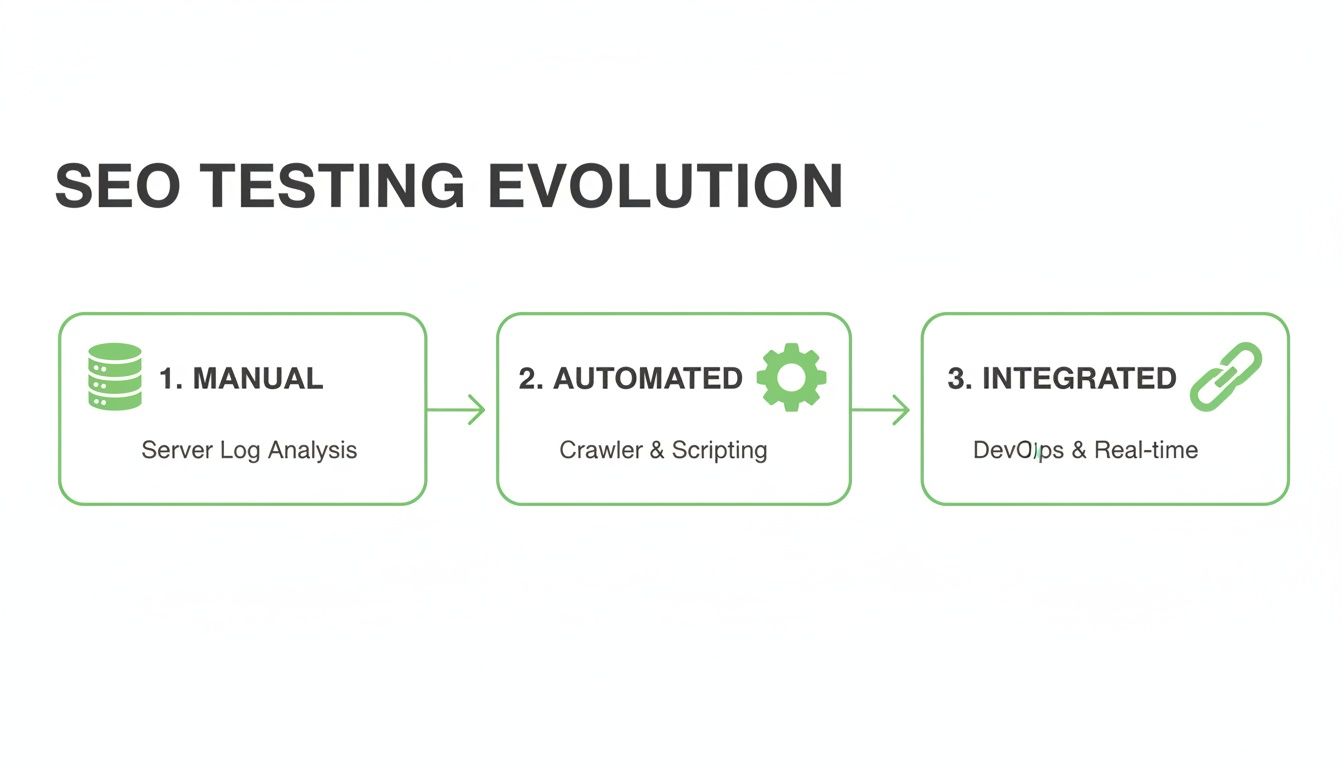

The evolution of SEO experimentation

To really understand where A/B testing for SEO is headed, you have to appreciate just how messy its history is. It wasn't always this clean. For a long time, it was a total hack job.

Think back to the mid-90s, the wild west of the internet. Experimentation was a brutal, manual slog. If you wanted to test something, you had to be a tech wizard who could dig through server logs and Frankenstein together some JavaScript redirects just to get a blurry picture of what users were doing. It was the dark ages of optimization, built on pure guesswork.

The game started to change in March 2006 when Google launched its Website Optimizer. This was a huge deal. Suddenly, marketers had a free tool that could automate split tests for things like headlines and layouts. For SEOs, this was the moment the door creaked open for data-driven optimization. You could finally test meta titles and descriptions to see what actually drove more clicks from search. You can read more about the journey of A/B testing on mcmillanphillips.com.

From simple tools to big business

But that was just the start. The real 'aha' moment for me, as an entrepreneur, was learning about Google's own internal experiments. In 2009, they famously tested 41 different shades of blue for their ad links. The winner—a shade almost imperceptible to the naked eye—was credited with boosting clicks enough to generate an estimated $200 million in extra annual revenue.

That’s the power we're talking about. This isn't just a fun fact; it's a profound lesson in the compounding value of tiny, data-driven optimizations. It’s what inspired us when we started building dynares. We saw this evolution—from manual hacks to the first automated platforms—and realized there was still a huge gap. Teams were spending weeks of engineering time on tests that should have taken minutes.

That’s why we built a platform to automate what used to be a massive resource drain. It’s about making this level of experimentation accessible so your team can focus on strategy, not just the technical setup. For a deeper dive into the different methods you can use, check out our guide on multivariate testing vs A/B testing. This history connects the dots from clumsy beginnings to the AI-powered automation we have today. It proves why embracing A/B testing for SEO is non-negotiable for anyone serious about growth. This isn't just a 'nice-to-have' anymore; it's a core business function.

Setting up an SEO test that actually works

Alright, let's get down to it. You can't just throw a change at the wall and see what sticks. A real A/B testing SEO strategy needs a deliberate, careful setup if you want clean data you can actually trust. Rushing this part is the fastest way to get noisy results and waste weeks of effort.

First things first: decide what you’re testing. The classic rookie mistake is changing ten things at once and having no idea what actually caused the shift. Pick one variable. Some of the best, most direct tests you can run hit the on-page elements that directly influence clicks from the SERP. For instance, getting good at how to write meta descriptions for SEO is a fundamental skill you can and should test.

Other great candidates for your first test include:

- Title tag structures: Test different formats. Try putting the brand name at the front instead of the back, or experiment with more question-based phrasing.

- H1 headings: Does a direct, benefit-driven H1 move the needle on engagement and rankings compared to a simple keyword-stuffed one? Find out.

- Structured data: Does adding FAQPage schema actually improve your SERP footprint and click-through rate? Test it. Don't just follow a blog post's advice blindly.
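If structured data is your test variable, the variant pages need the markup generated consistently. Here is a rough sketch of building FAQPage JSON-LD from question and answer pairs. The function name and the sample FAQ are hypothetical; the property names follow the schema.org FAQPage type.

```python
import json

def build_faq_jsonld(faqs):
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)

snippet = build_faq_jsonld([
    ("How long should I run an SEO A/B test?", "At least two full weeks."),
])
# Emit this only on variant pages, in the HTML the server sends.
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```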

Choose your pages wisely

Next up, you have to pick the right group of pages for the experiment. This is a critical step. The whole game is about finding a cluster of similar pages—think product detail pages, location pages, or blog posts in the same category—that have enough stable traffic to give you a reliable forecast. You'll want at least 30-50 pages in your test group to have enough statistical power. Anything less and the daily noise can easily drown out your signal.

Modern testing platforms are exactly why we can now run complex tests across hundreds of pages at once, something that used to be a massive engineering headache.

Once you have your group, you split it into a control and a variant. Don't get fancy here; a 50/50 split is almost always the right move. The idea is to create two buckets of pages that have historically performed almost identically. That way, you can be confident that any difference you see is because of your change, not random chance. If you're looking for new keyword opportunities to build page groups around, our guide on how to perform keyword research can help you spot them.
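One simple way to get two buckets with near-identical history is to rank pages by past traffic and flip a coin inside each adjacent pair. This is a sketch of that idea, not how any particular platform does it. The function name, the seed, and the sample URLs are all assumptions.

```python
import random

def split_pages(pages, seed=42):
    """Split {url: historical_sessions} into control and variant lists of similar traffic."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    ranked = sorted(pages, key=pages.get, reverse=True)
    control, variant = [], []
    # Walk the ranked list two pages at a time and randomly assign one
    # page of each pair to each bucket, so both buckets get similar traffic.
    for i in range(0, len(ranked), 2):
        pair = ranked[i:i + 2]
        rng.shuffle(pair)
        control.append(pair[0])
        if len(pair) > 1:
            variant.append(pair[1])
    return control, variant

# 40 made-up category pages with made-up historical sessions.
pages = {f"/category/{i}": 1000 - 10 * i for i in range(40)}
control, variant = split_pages(pages)
print(sum(pages[u] for u in control), sum(pages[u] for u in variant))
```

Save the resulting lists somewhere durable. The assignment must not change while the test is running.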

The technical setup is non-negotiable

This is where a lot of SEO tests fall apart. The technical implementation has to be flawless, and for SEO, that means one thing: server-side rendering. The change has to be made on the server before the page is delivered to the browser and the bot.

Why is this so non-negotiable? Because you need Googlebot to see the change instantly and every single time it crawls. Client-side rendering, where JavaScript changes the page in the user's browser, is a complete disaster for SEO testing. Googlebot might see the original version, a user might see the new one, and your data becomes an absolute train wreck. Just don't do it.
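Here is a minimal sketch of what "on the server, every single time" can look like. It assigns pages (not users) to a bucket with a hash of the URL, so Googlebot and humans always get the same HTML for a given page. The salt, the brand name, and both function names are hypothetical. In practice you would more likely look the bucket up from a stored split list than hash on the fly.

```python
import hashlib

def bucket_for(url_path, salt="title-test-1"):
    """Deterministically assign a page (not a user) to 'control' or 'variant'."""
    digest = hashlib.sha256(f"{salt}:{url_path}".encode("utf-8")).hexdigest()
    return "variant" if int(digest, 16) % 2 else "control"

def render_title(url_path, product, brand="ExampleBrand"):
    """Return the <title> the server emits for this URL, for bots and users alike."""
    if bucket_for(url_path) == "variant":
        return f"<title>{brand} | {product}</title>"   # hypothesis: brand first
    return f"<title>{product} | {brand}</title>"       # original format

print(render_title("/products/trail-shoes", "Trail Shoes"))
```

There is no user agent check and no cookie anywhere in that logic. That's the point: the URL alone decides what gets served.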

Finally, check your canonicals. Each URL in a page-split test should serve a single version, so every test page's canonical tag should still point to the correct definitive URL (usually the page itself). If your setup ever exposes a second URL for the same content, the canonical is what sidesteps duplicate content confusion. Any decent testing platform will handle this automatically, but it's on you to verify it's working correctly. This grounded, technically sound approach is what separates the pros from the people just messing around.
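Verifying canonicals doesn't need heavy tooling. The sketch below uses only Python's standard library and assumes each test page is expected to carry exactly one canonical tag pointing at its own URL. The class name, function name, and example URLs are made up.

```python
from html.parser import HTMLParser

class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> in a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonicals.append(attrs.get("href"))

def has_self_canonical(html, expected_url):
    """True only if the page has exactly one canonical and it matches expected_url."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals == [expected_url]

html = '<html><head><link rel="canonical" href="https://example.com/shoes"></head></html>'
print(has_self_canonical(html, "https://example.com/shoes"))
```

Run it against the HTML your server actually returns for both control and variant pages, not against your templates.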

Measuring SEO tests: how to know if you’re actually winning

Running the test is easy. The hard part is not lying to yourself about the results. It’s incredibly tempting to see a little green arrow after three days, declare victory, and pop the champagne. But most of the time, that’s just noise. Proper measurement is what separates a lucky guess from a repeatable win. Let's get into what you should actually be looking at to make a real call.

First, nail your primary metrics

Your north star for any SEO experiment is almost always going to be organic sessions or clicks from Google Search Console. This is the top-line number. Did more people from organic search land on your pages because of the change? Simple as that. But if you stop there, you're flying half-blind. A traffic lift is great, but why did it happen? The real insights live one layer deeper.

Then, dig into secondary metrics

The primary metric tells you what happened. These secondary metrics tell you how it happened. For any serious A/B testing SEO analysis, you absolutely need to track these.

- Impressions: Are your pages showing up in search more often? An increase here can mean Google is starting to see your content as relevant for a broader set of queries.

- Click-through rate (CTR): This one is huge. If your impressions are flat but clicks are up, it’s a massive signal that your new title or meta description is doing a much better job of grabbing attention on the SERP.

- Average rank: Did your position for target keywords actually go up? This is the most direct feedback you can get on how Google is re-evaluating your page’s authority.
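To see how these metrics fit together, here is a small sketch of the "impressions flat, clicks up" case. The numbers are invented, and the helper names are not from any analytics API. It just does the arithmetic you'd run on a Search Console export for your variant pages.

```python
def summarize(clicks, impressions):
    """Bundle clicks, impressions, and the CTR derived from them."""
    ctr = clicks / impressions if impressions else 0.0
    return {"clicks": clicks, "impressions": impressions, "ctr": ctr}

def relative_change(before, after):
    """Relative change from before to after, e.g. 0.15 for +15%."""
    return (after - before) / before

# Variant pages before the test and during it (made-up totals).
before = summarize(clicks=400, impressions=10_000)
during = summarize(clicks=460, impressions=10_000)

impression_change = relative_change(before["impressions"], during["impressions"])
ctr_change = relative_change(before["ctr"], during["ctr"])
print(f"Impressions: {impression_change:+.1%}, CTR: {ctr_change:+.1%}")
```

Flat impressions with a CTR jump points at the snippet (title or description), not at rankings.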

Now, let's connect this to what the business actually cares about. A 15% traffic uplift that kills your conversion rate is not a win; it's a liability. Tracking the money metrics is non-negotiable. If you need a solid framework for tying marketing actions to real financial results, our guide on how to measure marketing ROI breaks it down. Looking at these together stops you from celebrating a traffic win that secretly torpedoed your revenue.

The rules of the road: statistics and timing

This isn't just about watching charts. You have to apply some basic statistical rules, or you’ll end up making decisions based on random chance. A test result without statistical significance is just an opinion. Don’t bet your business on opinions. I never call a test without reaching at least a 95% confidence level. That doesn't make the result certain, but it gives you strong evidence that what you’re seeing isn't just a fluke caused by random daily fluctuations.
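If you want a feel for what "95% confidence" means in practice, here is one simple check: a permutation test on per-page traffic changes. To be clear, this is a technique I'm swapping in for illustration, not the model any specific testing platform uses, and the per-page numbers are invented. A p-value below 0.05 corresponds to clearing the 95% bar.

```python
import random

def permutation_p_value(control, variant, n_iter=10_000, seed=0):
    """One-sided p-value: how often random relabelling matches or beats the observed gap."""
    rng = random.Random(seed)
    n_variant = len(variant)

    def gap(values):
        # Mean of the first n_variant values minus mean of the rest.
        first, rest = values[:n_variant], values[n_variant:]
        return sum(first) / len(first) - sum(rest) / len(rest)

    pooled = list(variant) + list(control)
    observed = gap(pooled)
    hits = 0
    for _ in range(n_iter):
        rng.shuffle(pooled)  # pretend the group labels were assigned at random
        if gap(pooled) >= observed:
            hits += 1
    return hits / n_iter

# Relative change in organic sessions per page, test period vs pre-period (made up).
control_changes = [0.01, -0.02, 0.00, 0.02, -0.01, 0.01, 0.00, -0.01]
variant_changes = [0.08, 0.06, 0.09, 0.07, 0.10, 0.05, 0.08, 0.07]

p = permutation_p_value(control_changes, variant_changes)
print("significant at 95%" if p < 0.05 else "not significant yet")
```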

The other rookie mistake is calling a test too early. Running an experiment for just one week is almost always a bad idea. You haven't accounted for natural weekly cycles—the way people search on a Tuesday is completely different from a Saturday. My rule of thumb is to run tests for a minimum of two full weeks. For most significant tests, I let them cook for four to six weeks. This gives Google’s crawlers enough time to find, process, and react to your changes, and it gives you enough data to hit that crucial statistical significance.

When to pull the plug or go all in

Before you launch anything, you need to define your win-loss conditions. Write them down. Be ruthless. If a test is clearly losing after two weeks and the numbers are ugly, kill it. Don't let it run for another two weeks just because that was the plan. Cut your losses and move on to the next idea. But when you find a clear winner? Roll it out with confidence, book the win, and start applying those learnings across the rest of your site.
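Writing the conditions down can be as literal as putting them in code before launch. The sketch below encodes the rules from this section: two weeks minimum, six weeks maximum, 95% confidence. The -5% kill threshold is my own placeholder, so pick a number that fits your business.

```python
from dataclasses import dataclass

@dataclass
class StopRule:
    min_days: int = 14         # never call a test before two full weeks
    max_days: int = 42         # six weeks is the outer limit
    alpha: float = 0.05        # 95% confidence level
    kill_below: float = -0.05  # hypothetical threshold: kill at -5% or worse

def decide(rule, days_run, uplift, p_value):
    """Apply the pre-registered win/loss conditions to the current test readout."""
    if days_run < rule.min_days:
        return "keep running"
    if uplift <= rule.kill_below:
        return "stop: loser"
    if uplift > 0 and p_value < rule.alpha:
        return "roll out"
    if days_run >= rule.max_days:
        return "stop: inconclusive"
    return "keep running"

rule = StopRule()
print(decide(rule, days_run=28, uplift=0.06, p_value=0.01))
```

The value isn't in the code itself. It's that the thresholds exist before you've seen a single data point, so you can't move the goalposts later.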

Integrating your PPC and SEO wins

Running your SEO tests in a vacuum is one of the biggest and most common mistakes you can make. It’s a complete waste of potential. Your organic and paid search teams are working on the same SERP, targeting the same customers. If they aren't informing each other, you're leaving money on the table. This is where we connect the dots and build a real feedback loop.

Imagine you run an SEO test and find a new title tag that boosts your organic CTR by 10%. What's the next move? You don't just roll it out and pat yourself on the back. You immediately take that winning message and test it in your Google Ads headlines. This is how you stop doing random acts of marketing and start building a real growth engine.

Creating a unified growth strategy

Coordinating your PPC and SEO experiments is how you stop competing against yourself. Of course, to do this right, you have to understand the fundamental differences between organic and paid channels. This article on SEO vs Paid Ads is a great primer if you need to get everyone on the same page.

This integrated approach is exactly why we built dynares the way we did. We kept seeing marketers running completely disjointed campaigns, with SEO teams and PPC managers barely speaking to each other. It’s just so inefficient. Our platform is built around that core philosophy of integration. The idea is simple: you provide your brand guidelines and keywords, and the system generates coordinated ads and high-intent landing pages that actually work together. It’s all about creating a consistent, optimized experience from the SERP click straight through to the conversion.

The power of an integrated platform

Traditional SEO updates are a gamble. You roll out a bunch of meta tag tweaks based on a hunch, and if it flops, your rankings can tank for weeks or even months. A proper A/B testing SEO strategy de-risks this whole process. By testing your changes on a small subset of pages first—say, 10-20% of a URL category—you can validate a 5-15% visibility gain before a full rollout. This minimizes your risk while building a library of proven wins.

This is where a platform designed for synergy really shines. Our Auto A/B Testing feature, for example, doesn't just optimize for a single channel. It's built to find the winning variants that lift performance across the board. The synergy works both ways, too. You can use PPC data to quickly validate messaging before committing to a longer, more resource-intensive SEO test. If you want to dive deeper into this relationship, check out our guide on how PPC and SEO can work together. This integrated approach is how you stop wasting money and start building a scalable, efficient growth machine. It’s about making your channels work for each other, not in spite of each other.

Common questions about A/B testing SEO

If you’re just getting into SEO A/B testing, you’ve probably got some questions. And let's be real—you probably have some fears, too. There’s a lot of noise and bad advice out there. So let’s cut to the chase and tackle the big questions I hear all the time.

How long should I run an SEO A/B test?

The short answer? Almost always longer than you want to. Patience is the name of the game here. Anyone telling you to run a test for a few days and call it a win doesn't understand how this works. You're just measuring random noise at that point.

You need to run a test for at least two full weekly cycles to see how performance changes between weekdays and weekends. That puts you at a bare minimum of two weeks. For most of the tests I run, I’m looking at a four to six-week window. This gives Google enough time to crawl, index, and process the changes, and it gives you enough clean data to reach actual statistical significance.

Can A/B testing SEO hurt my rankings?

Let’s be direct: yes, if you do it wrong, you can absolutely do damage. This isn't something to dive into without knowing the risks. The biggest mistakes are cloaking (showing different content to Googlebot than to users) or accidentally creating a massive duplicate content problem. This is exactly why a proper server-side testing setup and the correct use of canonical tags are non-negotiable. Get that part wrong, and you're just asking for a penalty.

But when you set it up correctly with a professional testing tool, the risk is incredibly low. Here’s why:

- You’re only running the experiment on a small, controlled group of pages, not your entire domain.

- You can watch the results in near real-time and spot problems immediately.

- If a test shows a negative impact, you can roll it back instantly with a single click.

The risk of not testing and just shipping a sitewide change based on a gut feeling is far, far greater. One bad, untested idea pushed live can do infinitely more damage than a controlled experiment ever could.

What's a good uplift to expect from a single test?

Don’t get caught up chasing home runs. That’s not how you win at SEO testing. The real goal is to build a system for consistent, validated growth. Most of your successful tests will probably deliver a 2-10% uplift in organic traffic for the tested pages. That might not sound massive, but the power is in aggregation. Stringing together these small, proven wins across your site is what leads to huge compound growth over a year.

Every so often, you’ll hit a big one—a 15% or even 20%+ lift. Those are great. But the sustainable, long-term strategy is built on a steady stream of validated improvements, not a desperate search for one silver bullet.
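The compounding point is easy to check with a few lines of arithmetic. This sketch assumes the wins land on the same pages and multiply independently, which is an idealization, and the list of uplifts is invented.

```python
def compound(uplifts):
    """Combined relative uplift when each win multiplies on top of the previous ones."""
    total = 1.0
    for uplift in uplifts:
        total *= 1 + uplift
    return total - 1

# Six modest, validated wins over a year (made-up values in the 2-10% range).
wins = [0.04, 0.03, 0.06, 0.05, 0.02, 0.07]

print(f"Sum of wins:     {sum(wins):.1%}")
print(f"Compounded lift: {compound(wins):.1%}")
```

The compounded figure comes out higher than the simple sum, and the gap widens with every win you add.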

At dynares, we built our platform to make this level of sophisticated testing accessible. We help you coordinate PPC and SEO wins to build a truly efficient growth engine. Find out how we can help you scale your campaigns.
